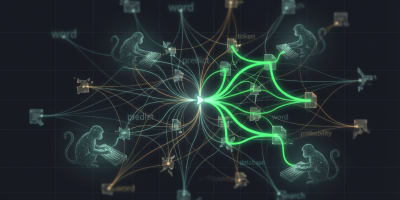

How do language models understand language?

Co-Founder / CTO
